I have spent the past year working on a backend gateway service that sits between external platforms and internal domain systems. While I cannot share the business context, the architectural lessons are worth sharing.

I had read about hexagonal (or onion) architecture before, but it never fully clicked. It sounded reasonable in theory, yet vague in practice. That changed once I had to deal with constant external change, inconsistent integrations, and the kind of mess that slowly leaks across a codebase if you let it.

This post is me writing down what finally clicked, using diagrams to make the ideas concrete.

Scope (and what this does not cover)

In scope: how hexagonal architecture works in practice, where responsibilities sit in a gateway service, and how this approach affects testing and change.

Out of scope: framework-specific implementations, deep domain modelling theory, and business logic or event schemas.

The mental model that finally stuck

Before getting into details, it helps to anchor on the big picture.

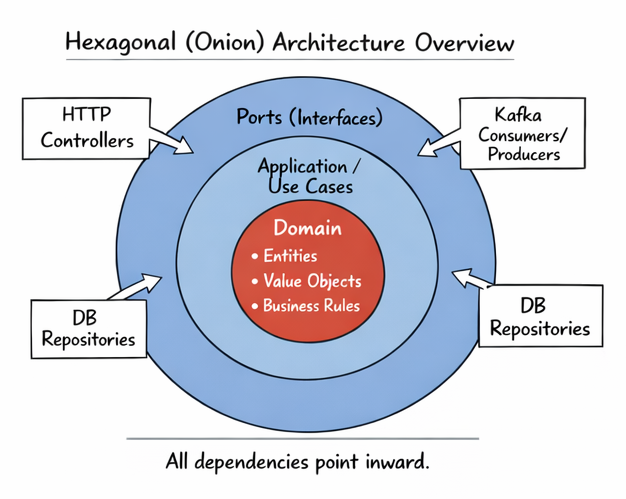

The key rule is simple: all dependencies point inwards. The domain has no knowledge of HTTP, messaging, databases, or external APIs. Everything outside adapts itself to the domain, not the other way around. Once I internalised this rule, many design decisions became much easier.

Why this matters so much for gateway services

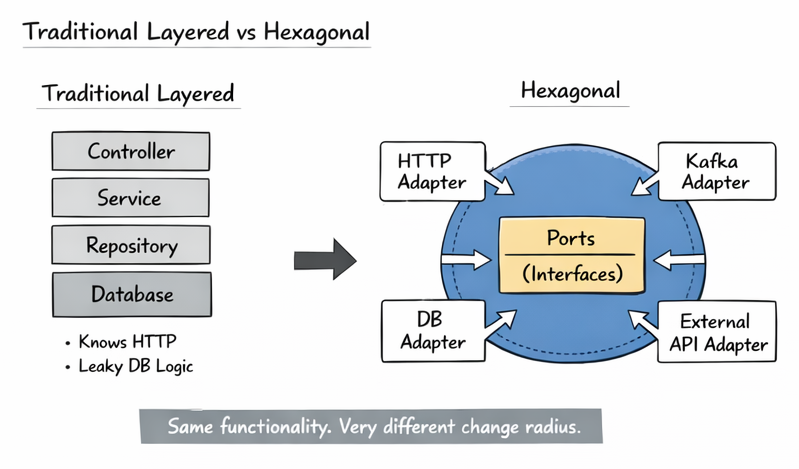

Gateway services are a stress test for architecture. They deal with external APIs you do not control, inconsistent payloads, retries, partial failures, and mismatched concepts. If your boundaries are vague, the mess spreads everywhere.

In the hexagonal version, HTTP, messaging, databases, and external APIs are just adapters. They do not talk to each other and they do not own business logic. The result is a much smaller change radius when something external changes.

How a request actually flows through the system

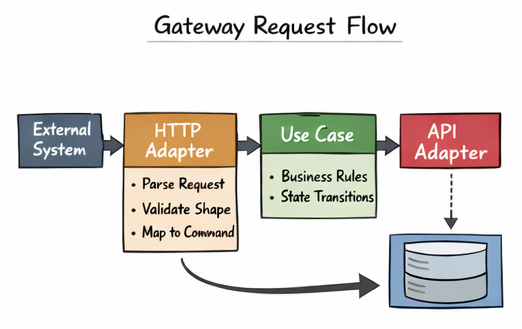

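Architecture only becomes useful once you can trace a real request. On the way in, an inbound adapter receives the request, translates it into the domain's own terms, and calls a use case. The use case reaches anything external only through interfaces the domain defines.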

On the way back out, adapters translate the result into whatever the external system expects. The use case never knows who called it or how the response will be sent.
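
To make the flow concrete, here is a minimal Python sketch of one request passing through an inbound adapter, into a use case, and back out. The names (`RegisterDevice`, `register_device`, `handle_http_request`) and the payload fields are hypothetical and are not taken from the service I worked on.

```python
from dataclasses import dataclass


# --- Domain core: plain data and logic, no HTTP or JSON concepts ---
@dataclass(frozen=True)
class RegisterDevice:
    """Input to the use case, expressed in domain terms."""
    device_id: str
    owner: str


@dataclass(frozen=True)
class Registration:
    """Result of the use case, expressed in domain terms."""
    device_id: str
    accepted: bool
    reason: str = ""


def register_device(command: RegisterDevice, known_owners: set[str]) -> Registration:
    """Use case: decide whether a device may be registered."""
    if command.owner not in known_owners:
        return Registration(command.device_id, accepted=False, reason="unknown owner")
    return Registration(command.device_id, accepted=True)


# --- Inbound adapter: translates HTTP-shaped data to and from the domain ---
def handle_http_request(body: dict, known_owners: set[str]) -> tuple[int, dict]:
    # 1. Translate the external payload into a domain command.
    command = RegisterDevice(device_id=body["deviceId"], owner=body["ownerName"])
    # 2. Call the use case; it never sees the raw request.
    result = register_device(command, known_owners)
    # 3. Translate the domain result into what the caller expects.
    if result.accepted:
        return 201, {"deviceId": result.device_id, "status": "registered"}
    return 422, {"deviceId": result.device_id, "error": result.reason}


status, payload = handle_http_request(
    {"deviceId": "d-1", "ownerName": "alice"}, known_owners={"alice"}
)
```

The status codes and camelCase keys live only in the adapter. A message consumer could call the same `register_device` function with a different translation on each side.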

The inversion that makes everything work

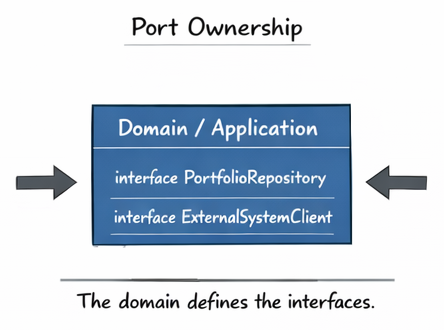

One of the most important lessons for me was understanding who owns interfaces. The domain defines the interfaces it needs, and infrastructure implements them.

This dependency inversion keeps the domain stable and infrastructure replaceable. Swapping a database or external provider becomes an implementation detail, not a refactor. My test strategy improved immediately once this was in place.
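
One way to express this ownership in Python is with `typing.Protocol`: the domain declares the interface it needs, and infrastructure supplies classes that satisfy it. This is only a sketch under assumptions; `PartnerNotifier`, `ConfirmBooking`, and both adapters are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Protocol


# --- Domain side: the port is declared next to the logic that needs it ---
class PartnerNotifier(Protocol):
    def notify(self, booking_id: str, message: str) -> None: ...


@dataclass
class ConfirmBooking:
    """Use case that depends only on the port, never on a concrete client."""
    notifier: PartnerNotifier

    def execute(self, booking_id: str) -> str:
        self.notifier.notify(booking_id, "confirmed")
        return "confirmed"


# --- Infrastructure side: adapters implement the domain's port ---
class ConsoleNotifier:
    """Stand-in for a real outbound adapter (for example an HTTP client)."""

    def notify(self, booking_id: str, message: str) -> None:
        print(f"booking {booking_id}: {message}")


@dataclass
class RecordingNotifier:
    """Test double: records calls instead of contacting anything."""
    calls: list[tuple[str, str]] = field(default_factory=list)

    def notify(self, booking_id: str, message: str) -> None:
        self.calls.append((booking_id, message))


# Swapping the adapter does not touch the use case.
fake = RecordingNotifier()
outcome = ConfirmBooking(notifier=fake).execute("b-42")
```

Because `ConfirmBooking` only knows about the port, a test can pass in `RecordingNotifier` and assert on `fake.calls` without any network or database involved.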

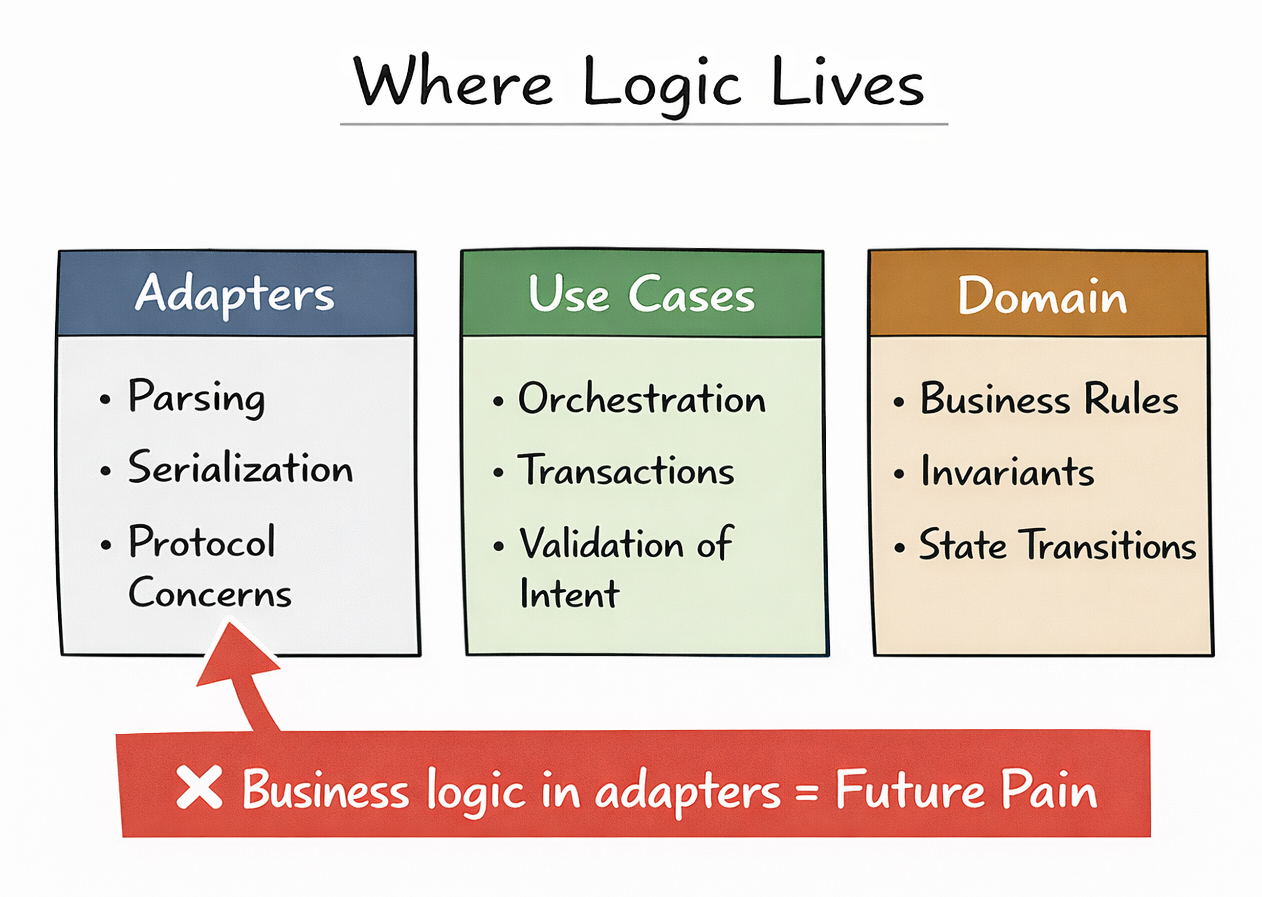

Where logic actually belongs

Any time business logic leaks into adapters, it becomes harder to test, harder to reuse, and harder to reason about. I now treat test pain as an architectural smell.

Things that surprised me along the way

Mapping turned out to be real work, not boilerplate. Converting between external and internal models is where assumptions surface. Hexagonal architecture does not hide this work; it forces you to do it explicitly.
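
As an illustration of where those assumptions surface, the sketch below maps a hypothetical partner payload onto an internal model. The field names, the cents-based amount, and the status vocabulary are all invented for this example.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum


class PaymentStatus(Enum):
    PENDING = "pending"
    SETTLED = "settled"


@dataclass(frozen=True)
class Payment:
    """Internal model: amounts are Decimal, status is a closed set."""
    reference: str
    amount: Decimal
    currency: str
    status: PaymentStatus


# Assumption: the partner uses several spellings for the same state.
_STATUS_MAP = {
    "PENDING": PaymentStatus.PENDING,
    "IN_PROGRESS": PaymentStatus.PENDING,
    "DONE": PaymentStatus.SETTLED,
}


class MappingError(ValueError):
    """Raised when the external payload cannot be expressed internally."""


def to_payment(external: dict) -> Payment:
    raw_status = external.get("state", "")
    if raw_status not in _STATUS_MAP:
        # An unmapped value is a surfaced assumption, not something to guess at.
        raise MappingError(f"unknown partner state: {raw_status!r}")
    return Payment(
        reference=external["txn_ref"],
        # Assumption: the partner sends integer minor units (cents).
        amount=Decimal(external["amount_cents"]) / Decimal(100),
        currency=external["ccy"].upper(),
        status=_STATUS_MAP[raw_status],
    )


payment = to_payment(
    {"txn_ref": "t-9", "amount_cents": 1250, "ccy": "eur", "state": "DONE"}
)
```

Every line of `to_payment` encodes a decision about the partner's data: units, casing, and which states collapse into one. Writing the mapper forces those decisions into one reviewable place.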

I also learned that not all validation belongs at the edges. Structural validation belongs in adapters, but business validation belongs in the core. If something would be invalid no matter how it arrived, it should live in the domain.
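
The split can be sketched like this, with structural checks in an adapter and business rules in the domain. The transfer example, its field names, and both rules are hypothetical.

```python
from dataclasses import dataclass


class MalformedRequest(Exception):
    """Structural problem: the payload is not shaped as expected."""


class BusinessRuleViolation(Exception):
    """Business problem: invalid no matter which channel delivered it."""


# --- Domain: the rules hold for HTTP, messaging, or any other entry point ---
@dataclass(frozen=True)
class Transfer:
    source: str
    target: str
    amount: int

    def __post_init__(self) -> None:
        if self.amount <= 0:
            raise BusinessRuleViolation("amount must be positive")
        if self.source == self.target:
            raise BusinessRuleViolation("source and target must differ")


# --- Adapter: only checks the shape and types of this particular payload ---
def parse_transfer(body: dict) -> Transfer:
    for key in ("from", "to", "amount"):
        if key not in body:
            raise MalformedRequest(f"missing field: {key}")
    if not isinstance(body["amount"], int):
        raise MalformedRequest("amount must be an integer")
    return Transfer(source=body["from"], target=body["to"], amount=body["amount"])


def classify(body: dict) -> str:
    """Small helper to show which layer rejects which input."""
    try:
        parse_transfer(body)
    except MalformedRequest:
        return "rejected by adapter"
    except BusinessRuleViolation:
        return "rejected by domain"
    return "accepted"
```

A payload with a missing field never reaches the domain, while a well-formed transfer of zero is rejected by `Transfer` itself, so the same rule applies if a second adapter is added later.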

When I would (and would not) use this

I would use this for gateway services, integration-heavy systems, and systems expected to change over time.

I would think twice for small, short-lived services or simple CRUD APIs with minimal business rules. This is not about dogma. It is about choosing structure that matches the problem.

Key takeaways

Protect the domain from external concerns

Let the domain own its interfaces

Treat adapters as translation layers only

Mapping is a feature, not boilerplate

Test pain usually signals architectural drift

Final thoughts

Hexagonal architecture stopped being academic for me once I saw it absorb real-world mess without collapsing. If you are dealing with integrations, change, or long-lived systems, the upfront discipline is worth it. Start by protecting your core logic from the outside world. Once that foundation is solid, everything else becomes easier to reason about.